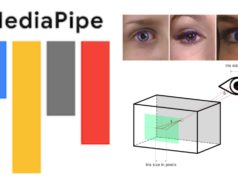

In March, Google introduced MediaPipe Objectron, a collection of mobile-centric, real-time 3D object detection models. The models were trained on a real-world 3D dataset of fully annotated images and can predict 3D bounding boxes for objects. In simple terms, a 3D bounding box is a box drawn tightly around an object in a scene, such as an augmented reality view, to mark where the object is and how much space it occupies. Machine learning has reached exceptional accuracy on many computer vision tasks when trained solely on photos.

Machine learning is a branch of computer science that focuses on building programs capable of learning from data, identifying patterns, and making decisions based on what they have learned. It is essential to keep track of a model after it is trained to make sure that it keeps giving good recommendations and predictions.

Tracking a model can be time-consuming, but keeping accurate records of how it performs is vital. Dedicated model monitoring helps reduce the time and resources needed to check and update models.

Monitoring allows real-time checks of a model's accuracy, and the results can be used to make adjustments and improvements.

Google announces Objectron Dataset

On November 9, Google released the Objectron dataset, a collection of short, object-centric video clips that capture common objects from different angles. According to Google, each video clip in the dataset comes with AR session metadata that provides additional information, including camera poses and sparse 3D point clouds.

Researchers at Google manually annotated a 3D bounding box around each object. Each annotation describes the object's position, orientation, and dimensions. The dataset contains 15,000 annotated video clips and 4 million annotated images. Google collected the data from a geo-diverse sample covering 10 countries across five continents.

3D Object Detection Model

Along with the dataset, Google is sharing a 3D object detection solution for four categories of objects: mugs, chairs, shoes, and cameras. The models are released in MediaPipe, Google's open-source framework for cross-platform ML solutions. The newly released models use a two-stage architecture. The first stage uses the TensorFlow Object Detection model to locate a 2D crop of the object. The second stage takes that crop and predicts a 3D bounding box around the object. The system then repeats the process for the next frame in the video.
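The two-stage flow described above can be sketched in a few lines of Python. This is only an illustration of the data flow, not MediaPipe's actual API: `run_two_stage`, `detect_2d`, and `lift_to_3d` are hypothetical names, the two stages are passed in as plain callables, and a frame is assumed to be a nested list of pixel rows to keep the example dependency-free.

```python
from typing import Callable, Iterable, List, Optional, Sequence, Tuple

# Hypothetical types for illustration only; not part of MediaPipe.
Frame = Sequence[Sequence[int]]              # rows of pixel values
Box2D = Tuple[int, int, int, int]            # (x_min, y_min, x_max, y_max) in pixels
Box3D = List[Tuple[float, float, float]]     # 3D points describing the box


def run_two_stage(
    frames: Iterable[Frame],
    detect_2d: Callable[[Frame], Optional[Box2D]],
    lift_to_3d: Callable[[Frame], Box3D],
) -> List[Optional[Box3D]]:
    """Run a 2D detector, crop the frame, then predict a 3D box from the crop."""
    results: List[Optional[Box3D]] = []
    for frame in frames:
        # Stage 1: locate the object in 2D.
        box = detect_2d(frame)
        if box is None:
            # No object found in this frame.
            results.append(None)
            continue
        x0, y0, x1, y1 = box
        # Cut the 2D crop out of the frame.
        crop = [list(row[x0:x1]) for row in frame[y0:y1]]
        # Stage 2: predict the 3D bounding box from the crop.
        results.append(lift_to_3d(crop))
    return results
```

In a real pipeline the two callables would wrap trained models, and frames would typically be image arrays rather than nested lists.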

The 3D object detection solution is evaluated using 3D intersection over union (IoU). Here, an algorithm computes the 3D IoU of any pair of oriented 3D boxes. It first finds the intersection points between the faces of the two boxes using the Sutherland-Hodgman polygon clipping algorithm. The volume of the intersection is then calculated from the convex hull of the clipped polygons. Finally, the IoU is computed from the volume of the intersection and the volume of the union of the two boxes.
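To make the clipping step more concrete, here is a minimal 2D sketch of the same idea, not Google's implementation. It clips one convex polygon against another with Sutherland-Hodgman, measures areas with the shoelace formula, and computes IoU as intersection / (area A + area B - intersection). The function names are hypothetical, and both polygons are assumed to be convex with vertices listed counter-clockwise. The 3D version described above works on box faces and volumes instead of edges and areas.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def clip_polygon(subject: List[Point], clip: List[Point]) -> List[Point]:
    """Sutherland-Hodgman: clip `subject` against the convex polygon `clip`.

    Assumes both polygons list their vertices counter-clockwise.
    """

    def inside(p: Point, a: Point, b: Point) -> bool:
        # True when p lies on or to the left of the directed edge a -> b.
        return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0]) >= 0

    def intersect(p: Point, q: Point, a: Point, b: Point) -> Point:
        # Point where segment p-q crosses the infinite line through a and b.
        dx1, dy1 = q[0] - p[0], q[1] - p[1]
        dx2, dy2 = b[0] - a[0], b[1] - a[1]
        denom = dx1 * dy2 - dy1 * dx2
        t = ((a[0] - p[0]) * dy2 - (a[1] - p[1]) * dx2) / denom
        return (p[0] + t * dx1, p[1] + t * dy1)

    output = list(subject)
    for i in range(len(clip)):
        a, b = clip[i], clip[(i + 1) % len(clip)]
        current, output = output, []
        for j in range(len(current)):
            p, q = current[j - 1], current[j]  # edge from previous vertex to this one
            if inside(q, a, b):
                if not inside(p, a, b):
                    output.append(intersect(p, q, a, b))  # entering the clip region
                output.append(q)
            elif inside(p, a, b):
                output.append(intersect(p, q, a, b))      # leaving the clip region
        if not output:
            break  # polygons do not overlap
    return output


def polygon_area(poly: List[Point]) -> float:
    """Shoelace formula; returns 0.0 for an empty polygon."""
    pairs = zip(poly, poly[1:] + poly[:1])
    return 0.5 * abs(sum(x0 * y1 - x1 * y0 for (x0, y0), (x1, y1) in pairs))


def iou_2d(a: List[Point], b: List[Point]) -> float:
    """IoU = intersection / (area_a + area_b - intersection)."""
    inter = polygon_area(clip_polygon(a, b))
    union = polygon_area(a) + polygon_area(b) - inter
    return inter / union if union > 0 else 0.0
```

For example, two 2x2 squares offset by one unit in each direction overlap in a 1x1 square, so `iou_2d([(0, 0), (2, 0), (2, 2), (0, 2)], [(1, 1), (3, 1), (3, 3), (1, 3)])` gives 1 / (4 + 4 - 1), or about 0.143.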

Google has published the details of the new Objectron dataset on its website. The release covers several categories of objects and includes the video sequences, annotation labels with 3D bounding boxes, and AR metadata. It also contains processed datasets, scripts to run the evaluation, and scripts to load the data in JAX, PyTorch, and TensorFlow and to visualize the dataset.